OpenAI is back with another upgrade to ChatGPT’s image capabilities, and this one feels less like a gimmick and more like a serious step toward making AI visuals actually useful. OpenAI has officially introduced ChatGPT Images 2.0, a new image generation system that leans heavily into reasoning and accuracy.

ChatGPT Images 2.0 focuses on understanding, not just generating

Instead of blindly turning prompts into visuals, the model now takes a more deliberate approach, essentially “thinking” through what you’re asking before generating the image.

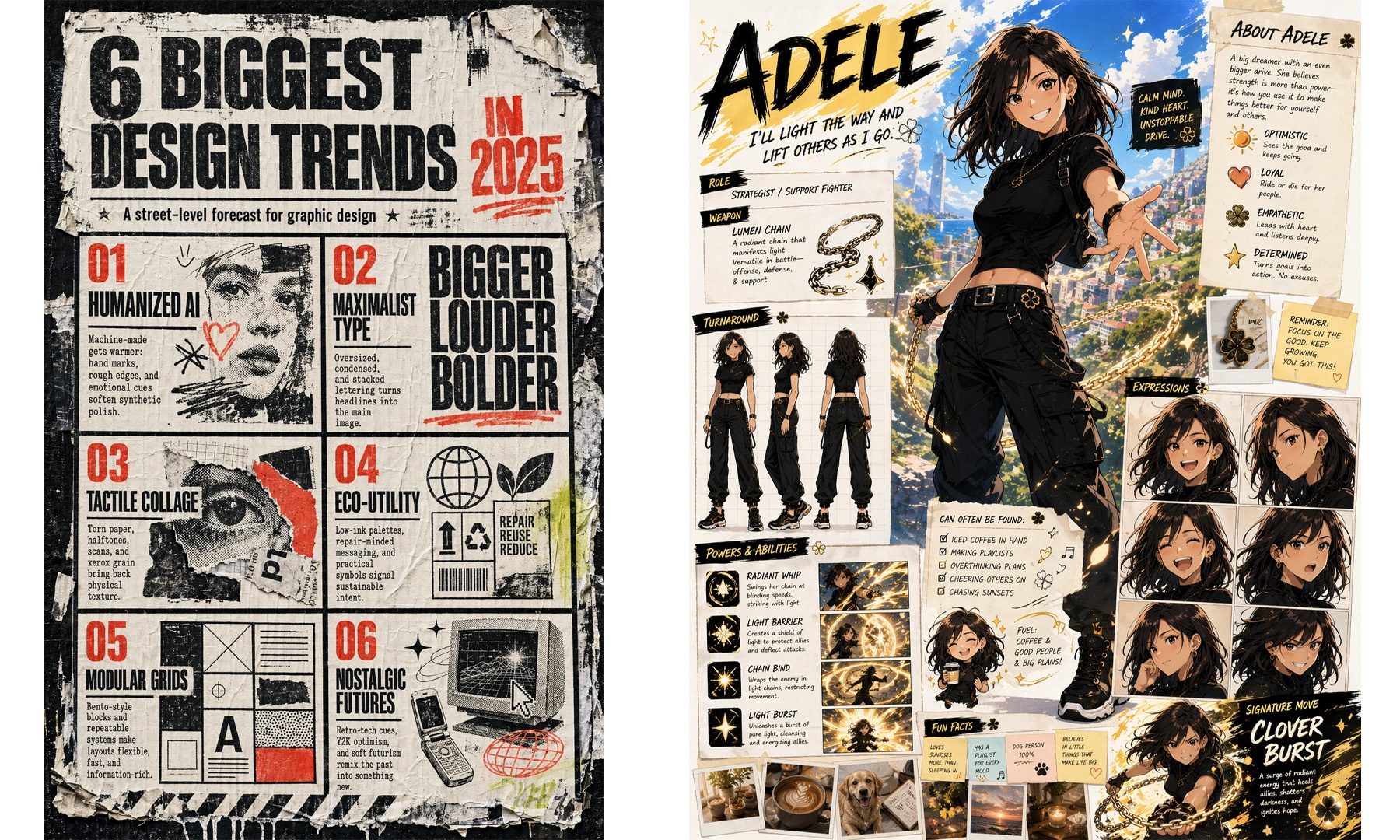

That shift shows up in a few key ways. The model is far better at handling complex prompts, can maintain consistency across multiple outputs, and is noticeably more reliable when it comes to placing text inside images, which is something earlier AI tools famously struggled with.

It can also generate multiple variations from a single prompt while keeping the core idea intact, which makes it far more useful for iterative work. The result is a system that feels less like an AI art generator and more like a tool that actually understands what you’re trying to create.

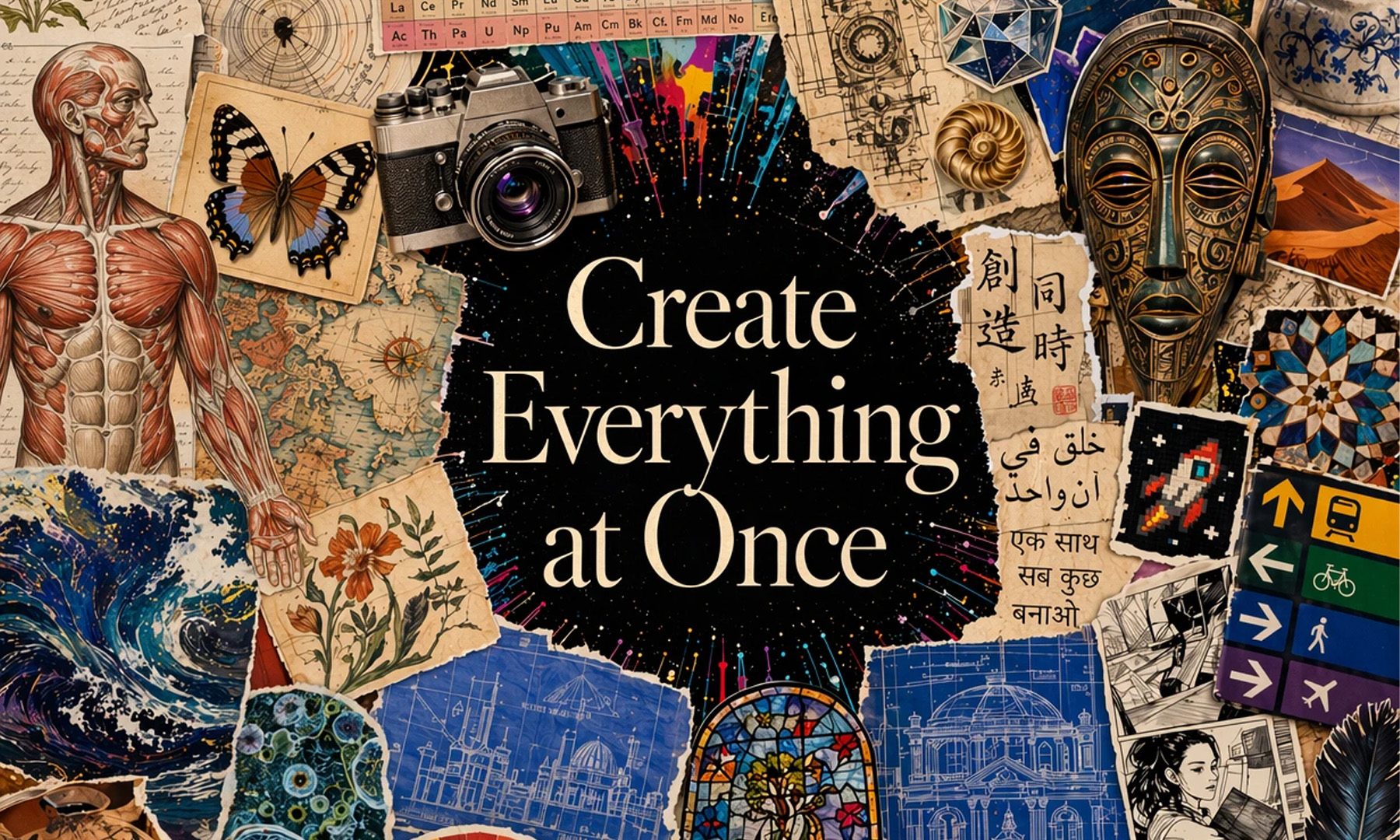

This is where AI images start becoming practical

What makes this update interesting is the direction OpenAI is taking. This isn’t just about chasing viral AI art anymore; it’s also about making image generation usable in real-world scenarios. With improved text rendering, better structure, and more predictable outputs, ChatGPT Images 2.0 starts to make sense for things like presentations, social media creatives, or quick design mockups. It’s still not a full replacement for professional tools, but it’s getting close enough to handle a surprising amount of everyday creative work.

That said, it’s not perfect. There are still occasional inconsistencies, especially with more complex layouts or non-English text. But compared to where things were even a year ago, the progress is hard to ignore. And if this trend continues, the line between “AI-generated” and “actually usable” visuals is going to get thinner very quickly.

ChatGPT Images 2.0 is available starting today to all ChatGPT and Codex users, with advanced outputs using Thinking available to Plus, Pro, Business, and Enterprise users. The underlying model, gpt-image-2, is also available in the API.