At a glance, the name SimSensei sounds like something that could virtually teach you to be the next ninja master. Alas, that dream is too far-fetched; SimSensei is actually a Kinect-powered virtual therapist that uses motion-recognition technology to read a patient’s body language, looking for signs of underlying anxiety, nervousness, happiness, or contemplation.

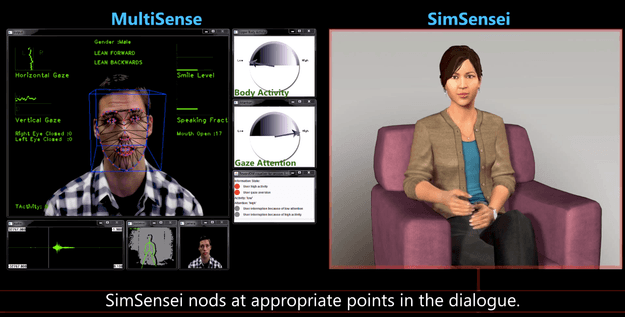

Developed by a team at the University of Southern California’s Institute for Creative Technologies, the SimSensei program is still in its early stages. The beta preview uses a virtual avatar that acts as your shrink, while the other half of the screen shows the Microsoft Kinect tracking changes in the patient’s face and body movements. SimSensei records the patient only while he or she is speaking, so the program can see how the patient physically reacts when answering questions. The cues it tracks include leaning forward or backward in the chair, smiling, prolonged or absent eye contact, and the direction in which the eyes move.

That’s not to say SimSensei is entirely robotic, either. The virtual therapist is even programmed to “Hmm” at appropriate times, as if pondering a response, and to guide the conversation based on the patient’s answers. The point is to make the program look and feel as natural as possible, but that’s also not to say SimSensei doesn’t sound kind of creepy. I may not have been to many shrinks, but the sample session USC provided in the video below shows a conversation that lacks white noise, feels awkward, and sounds a bit intimidating. We’re not sure what’s worse: trusting a human stranger or a friendly…ish robot with your deepest anxieties.

SimSensei is one of many programs being developed for the Association for Computing Machinery’s contest. The competition will compare the programs to see which can most accurately diagnose a patient based on body language, distinguishing depressed patients from non-depressed ones. Hey, at least if you reveal all your deep, dark secrets to SimSensei or other virtual psychiatrists, you will never run the risk of bumping into them in the street or something equally embarrassing. Meanwhile, students studying psychology have another reason to go back to their dorm rooms and weep themselves to sleep tonight for having chosen a doomed career path. Good thing there’s a robot in development that they can talk to.