Want to know the name of that flower or bird you encounter during your stroll through a park? Soon, Google Assistant will be able to tell you, using the camera and artificial intelligence.

Google kicked off its 2017 I/O conference with a focus on AI and machine learning, and one computer vision technology it highlighted is Google Lens, which lets the camera do more than just capture an image: it gives greater context about what you’re seeing.

Coming to Google Assistant and Google Photos, the Google Lens technology can “understand what you’re looking at and help you take action,” Google CEO Sundar Pichai said during the keynote. For example, if you point the camera at a concert venue marquee, Google Assistant can tell you more about the performer, as well as play music, help you buy tickets to the show, and add it to your calendar, all within a single app.

When the camera’s pointed at an unfamiliar object, Google Assistant can use image recognition to tell you what it is. Point it at a shop sign and, using location info, it can give you meaningful information about the business. All this can be done through the “conversational” voice interaction the user has with Assistant.

“You can point your phone at it and we can automatically do the hard work for you,” Pichai said.

With Google Lens, your smartphone camera won’t just see what you see, but will also understand what you see to help you take action. #io17 pic.twitter.com/viOmWFjqk1

— Google (@Google) May 17, 2017

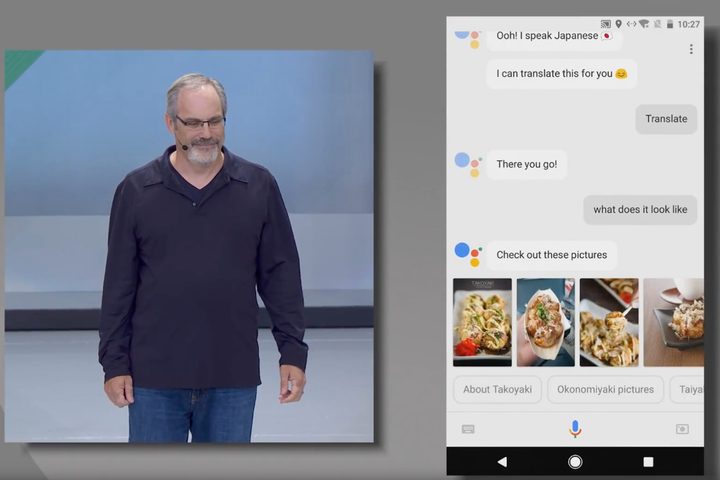

If you use Google’s Translate app, you have already seen how the technology works: Point the camera at some text and the app will translate it into a language you understand. In Google Assistant, Google Lens will take this further. In a demonstration, Google showed that Google Assistant will not only translate foreign text but also display images of what the text describes, to give more information.

Image recognition technology isn’t new, but Google Lens shows how advanced machine learning is becoming. Pichai said that, as with its work on speech, Google is seeing great improvements in vision. The computer vision technology not only helps recognize what something is, but can even help repair or enhance an image. Took a blurry photo of the Eiffel Tower? Because the computer recognizes the object and knows what it’s supposed to look like, it can automatically enhance that image based on what it already knows.

“We can understand the attributes behind a photo,” Pichai said. “Our computer vision systems now are even better than humans at image recognition.”

To make Lens effective at its job, Google is relying on Cloud Tensor Processing Unit (TPU) chips to handle training and inference for its machine learning. Each second-generation TPU board can handle 180 trillion floating point operations per second; 64 TPU boards in one supercomputer can handle 11.5 petaflops. With this computing power, the new TPU can handle both training and inference, which wasn’t possible in the past (the previous TPU could handle only inference, not the more complex training). Machine learning takes time, but this hardware will help accelerate the effort.
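
For readers who want to check the math, the two TPU figures are consistent with each other. The short Python sketch below multiplies the per-board number by the board count. The variable and function names are illustrative, not anything Google published, and it assumes peak throughput simply adds up across boards.

```python
# Figures quoted in the article; the names here are illustrative only.
TFLOPS_PER_BOARD = 180   # trillion floating point operations per second, per board
BOARDS_PER_POD = 64      # TPU boards in one supercomputer


def pod_petaflops(tflops_per_board: float, boards: int) -> float:
    """Aggregate peak throughput in petaflops (1 petaflop = 1,000 teraflops).

    Assumes peak throughput scales linearly with the number of boards.
    """
    return tflops_per_board * boards / 1000


total = pod_petaflops(TFLOPS_PER_BOARD, BOARDS_PER_POD)
print(f"{total:.2f} petaflops")  # prints "11.52 petaflops"
```

The result, 11.52 petaflops, rounds to the 11.5 figure cited above.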

Google Lens will also power the next update of Google Photos. Image recognition is already used in Photos to recognize faces, places, and things to help with organization and search. With Google Lens, Google Photos can give you more information about the things in your photos, like the name and description of a building. Tapping a phone number in a photo will place a call, and Lens can pull up more info on an artwork you saw in a museum or even enter a Wi-Fi password automatically from a photo you took of the back of a Wi-Fi router.

Assistant and Photos will be the first apps to use Google Lens, but it will be rolled out to other apps. And with the announcement of support for Assistant on iOS, iPhone users will be able to use the Google Lens technology as well.