Sony’s PlayStation 5 age verification rules are starting to roll out in the UK and Ireland. They are currently in a pilot phase, with full enforcement planned by June 2026. The company says adult accounts will need to verify their age to keep using communication, broadcasting, and some social features. Child safety is the stated reason, but the rollout has quickly turned into another argument about privacy, data collection, and how much policing online platforms should be allowed to do.

Your PS5 still works, but your social life may not

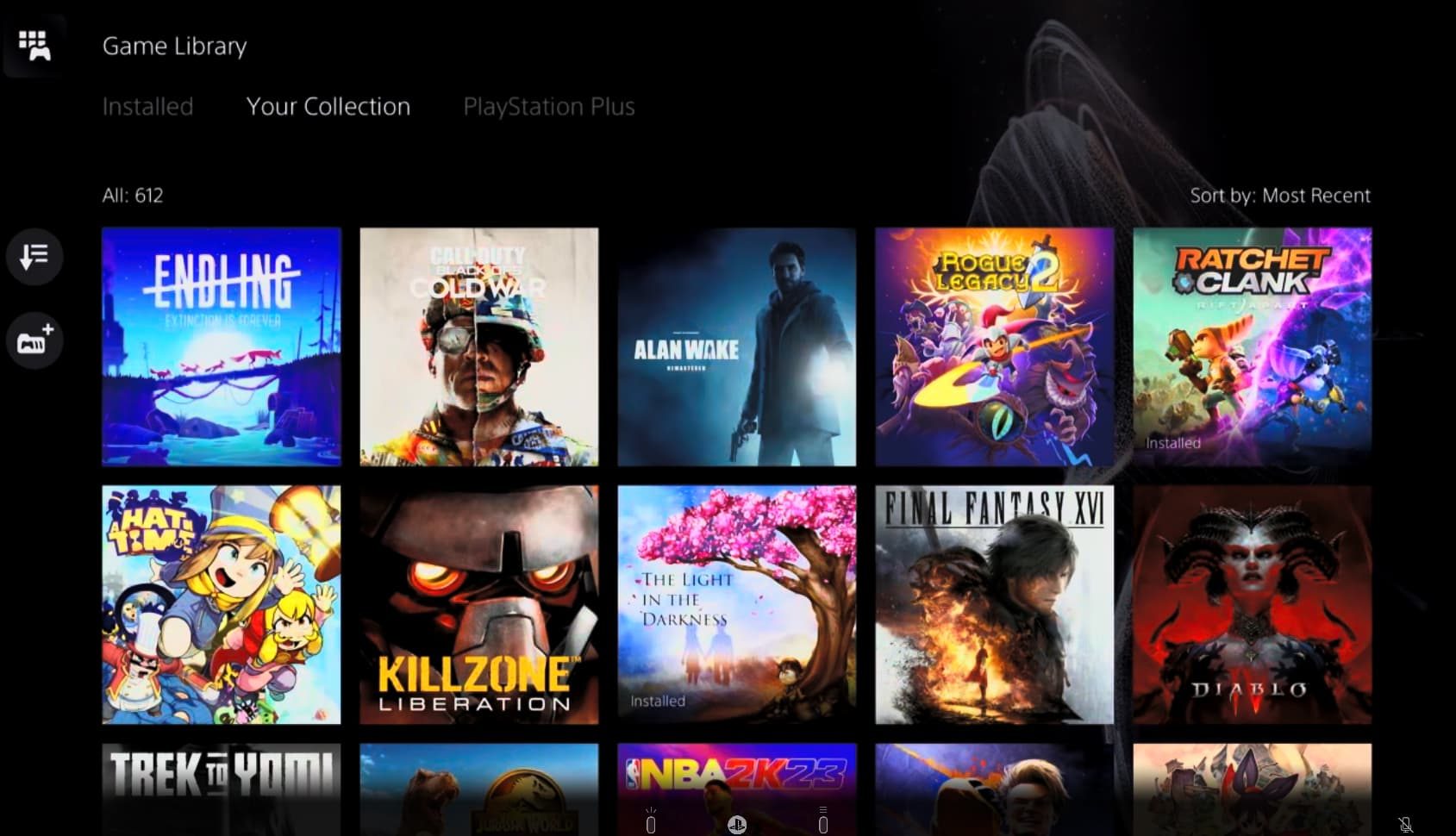

Sony says unverified users can still play games, use non-communication features, and buy content from the PlayStation Store. The restrictions apply to messaging, voice chat, text chat, joining parties or group sessions, Discord voice chat, broadcasting to YouTube or Twitch, and some in-game communication or user-generated content features.

Verification can be completed through methods such as a mobile number check, a facial scan, or a government ID, with Yoti handling the checks. Sony presents it as a one-time step, but the reaction online has been far less calm.

Across Reddit and X, users have complained about failed attempts, server errors, and the broader idea of having to prove adulthood on an account they may have used for years. Some players are less worried about this single check than about where it leads. Today it is party chat; tomorrow it could be wider access to games, communities, streaming, or purchases.

Discord already showed how messy this gets

The timing also makes Sony’s move harder to ignore. Discord recently faced a similar backlash over age verification rules, where users had to prove they were adults to keep full access to certain perks and age-restricted spaces. Discord later admitted it rushed parts of the rollout and began rethinking the process after user pushback.

PlayStation’s age verification appears to be part of a broader regulatory squeeze. The UK is pushing platforms through the Online Safety Act, Ireland falls under the EU’s Digital Services Act, and California has moved toward device-level age checks. Governments want stronger child safety controls, and companies are responding to that pressure. The concern is what users are being asked to give up in return. A phone number, face scan, or ID document is becoming the price of keeping features that used to be normal parts of the service.

Making matters worse, Sony is also enforcing a 30-day DRM check on the PS5: if the console stays offline for more than 30 days, some games may become inaccessible. The age check feeds the same concern. The PS5 still runs your games, but more of the PlayStation experience now depends on verification, online checks, and account approval.