Autonomous vehicles are becoming more and more sophisticated, but concerns still abound about the safety of such systems. Creating an autonomous system that drives safely in laboratory conditions is one thing, but being confident in those systems’ ability to navigate in the real world is quite another.

Researchers from the Massachusetts Institute of Technology (MIT) have been working on just this problem, looking at the differences between what autonomous systems learn in training and the issues that arise in the real world. They have created a model that identifies situations in which what an autonomous system has learned does not match events that actually occur on the road.

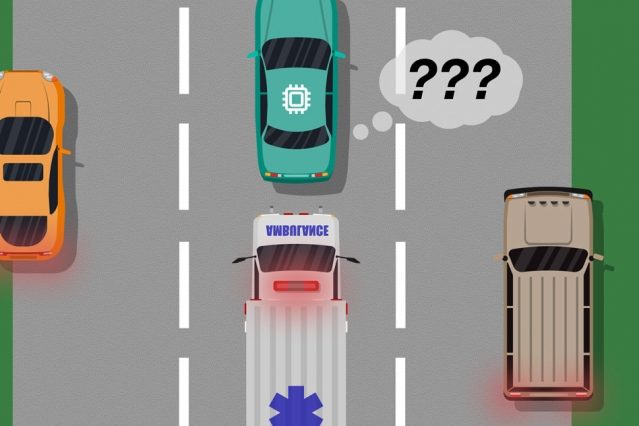

An example the researchers give is the difference between a large white car and an ambulance. If an autonomous car has not been trained to differentiate between these two types of vehicle, or lacks the sensors to do so, it may not know that it should slow down and pull over when an ambulance approaches. The researchers describe these kinds of scenarios as “blind spots” in training.

To identify these blind spots, the researchers had humans oversee an artificial intelligence (A.I.) as it went through simulation training and give feedback on any mistakes the system made. The human feedback can then be compared with the A.I. training data to identify situations where the A.I. needs more or better information to make safe and correct choices.
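As a rough illustration of that comparison step, the sketch below tallies human feedback per situation and flags the situations where reviewers mostly judged the A.I.'s action to be a mistake. It is not the researchers' model: the function name `find_blind_spots`, the situation labels, and the simple 50 percent threshold are all assumptions made for this example.

```python
from collections import defaultdict


def find_blind_spots(feedback, threshold=0.5):
    """Return the situations where humans mostly rejected the A.I.'s action.

    `feedback` is an iterable of (situation, acceptable) pairs, where
    `acceptable` is True if the human judged the A.I.'s action to be fine.
    Both the data layout and the threshold rule are hypothetical.
    """
    counts = defaultdict(lambda: [0, 0])  # situation -> [rejected, total]
    for situation, acceptable in feedback:
        counts[situation][1] += 1
        if not acceptable:
            counts[situation][0] += 1
    # Flag a situation when the share of rejections exceeds the threshold.
    return {
        situation
        for situation, (rejected, total) in counts.items()
        if rejected / total > threshold
    }


# Hypothetical feedback collected while a human watched simulated driving.
feedback = [
    ("ambulance_behind", False),
    ("ambulance_behind", False),
    ("ambulance_behind", True),
    ("white_car_behind", True),
    ("white_car_behind", True),
]
blind_spots = find_blind_spots(feedback)
```

Here the ambulance situation is flagged because two of its three reviews were rejections, while the white car situation is not. Human feedback is often noisy or inconsistent, so a real system would likely need a more careful way of combining labels than a fixed cutoff.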

“The model helps autonomous systems better know what they don’t know,” author of the paper Ramya Ramakrishnan, a graduate student in the Computer Science and Artificial Intelligence Laboratory, said in a statement. “Many times, when these systems are deployed, their trained simulations don’t match the real-world setting [and] they could make mistakes, such as getting into accidents. The idea is to use humans to bridge that gap between simulation and the real world, in a safe way, so we can reduce some of those errors.”

This can also work in real time, with a person in the driving seat of an autonomous vehicle. As long as the A.I. is maneuvering the car correctly, the person does nothing, but if they spot a mistake, they can take the wheel, signaling to the system that it missed something. This teaches the A.I. which situations produce a conflict between how it would behave and what a human driver deems safe and responsible driving.

Currently, the system has only been tested in virtual video game environments, so the next step is to take it on the road and test it in real vehicles.