Nvidia CEO Jensen Huang just wrapped his first GTC keynote of 2022, and during it, the executive announced Nvidia’s next-gen Hopper architecture. It’s launching in the H100, a powerful GPU restricted to data center use, but the announcement also holds some hints for the RTX 4080 and Nvidia’s next-gen consumer graphics cards.

Nvidia didn’t talk about the RTX 4080 at GTC, and, at least according to rumors, the card won’t use the Hopper architecture. A couple of years back, ahead of the launch of the RTX 30-series, rumors suggested that Nvidia would use Hopper for its RTX 40-series graphics cards. Now it seems Nvidia will release two architectures in 2022: Hopper for the data center and Ada Lovelace for consumers. That doesn’t mean we can’t glean information from the announcements, though.

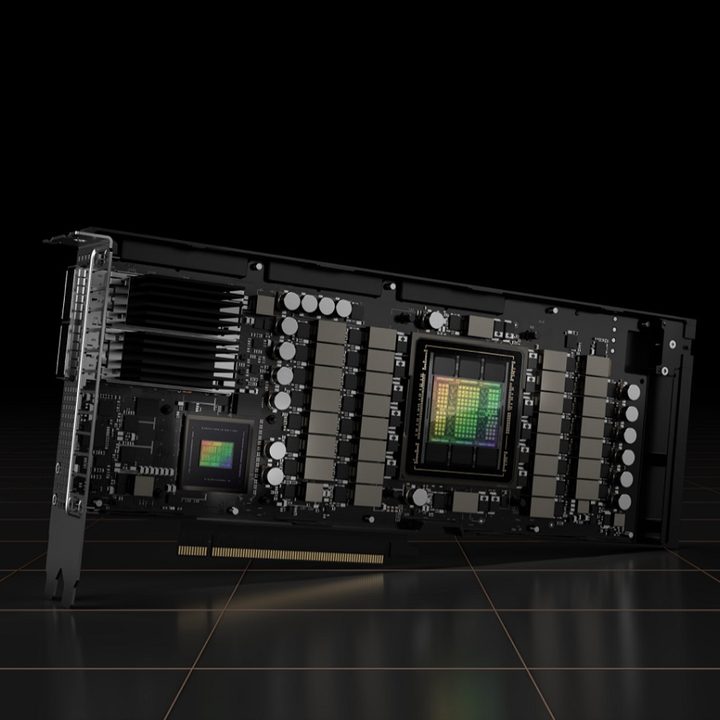

Dual architectures

This is only the second time Nvidia has split its consumer and data center architectures. Between Pascal and Turing, Nvidia introduced the Volta architecture for data centers. It was a bit of a stopgap, allowing Nvidia to move its data center products to a smaller manufacturing process in preparation for the next generation of consumer products.

That changed with the RTX 30-series, when Nvidia unified both of its product ranges under the Ampere architecture. In other words, there isn’t much precedent for what Nvidia is doing here. This is the first time we’ve truly seen two Nvidia architectures live side by side.

We learned that Hopper will use TSMC’s N4 manufacturing process and that Nvidia is targeting efficiency with it. Interestingly, Nvidia is rumored to use TSMC’s N5 process for the RTX 4080 rather than the more efficient N4 process that Hopper GPUs use.

N5 and N4 belong to the same family, but N4 is slightly more efficient than N5. Based on the rumors we’ve seen about the massive power requirements of RTX 40-series graphics cards, N5 seems more likely for the consumer range. This lines up with leakers’ suggestions that the RTX 4080 will have big problems with efficiency.

We could be seeing a repeat of the Pascal/Volta/Turing situation. Nvidia appears to be leading with Hopper, a more efficient architecture, to set up the generation after the RTX 4080. Consumer cards may move to a smaller process by then, but it appears they will still lag behind the data center ones.

The manufacturing process is the biggest development, but Hopper holds a couple of other clues, too.

NVLink interconnect

Nvidia focused on scalability with the fourth generation of NVLink. Today, this interconnect is only relevant in Nvidia’s data center products, but Huang announced that it’s opening up to customers and partners.

By opening NVLink, Nvidia says, the goal is to have other companies design semi-custom chips that work with Nvidia’s products. This could be relevant for Nvidia’s upcoming consumer graphics cards. Rumors suggest that AMD is going with a multichip module (MCM) design for its RX 7000 graphics cards, essentially combining multiple separate dies in a single package.

Opening NVLink could lay the foundation for Nvidia to do something similar. Rumors suggest that AMD will, for the first time, leapfrog Nvidia with its RX 7000 graphics cards, and that could be due to the MCM design. It’s not clear whether the RTX 4080 will use an MCM design, but the launch of Hopper suggests it won’t.

The last hint comes from the H100 CNX, a version of the H100 GPU coupled with an Nvidia ConnectX-7 SmartNIC. The pairing is meant to reduce latency and improve throughput to the GPU, eliminating CPU bottlenecks in servers.

A SmartNIC isn’t relevant for a desktop GPU, but we may see a similar approach of bypassing the CPU with the RTX 4080. Nvidia and IBM have teamed up to improve memory bandwidth and throughput by connecting an SSD directly to the GPU. We assumed this was a far-off bit of tech, but GTC suggests it could show up sooner rather than later.

Ultimately, though, the RTX 4080 is still a big question mark. We have leaks about performance and efficiency, as well as a few hints from Hopper, but we’ll have to wait until the card launches to learn everything about it. It’s currently rumored to launch this fall, though Nvidia hasn’t confirmed that timeline.