Google is bringing Gemini Intelligence to Android, putting the best of Gemini on its most intelligent devices. The company wants Gemini to help you get things done throughout the day while you stay in control and your data stays private. The features roll out this summer, starting with Samsung Galaxy and Google Pixel devices, and will reach other Android devices, including watches, cars, glasses, and laptops, later this year.

Your assistant is about to get a lot more hands-on, without you having to ask twice

Google is clearly pushing Gemini beyond being just an assistant that answers questions. Gemini Intelligence, as the company calls it, is meant to take over the small, repetitive tasks that usually eat up your time. On devices like the Galaxy S26 and Pixel 10, Google has already been fine-tuning this across food delivery and ride-hailing apps, with the goal of letting the phone handle the boring steps while you stay focused on what you actually want to do.

What makes this interesting is how far it goes into real-life situations. Instead of bouncing between apps, Gemini can piece things together on its own. It could find your class syllabus in Gmail and automatically add the required books to your cart, or help you grab a bike for a spin class without you having to tap through multiple screens. It even understands visual context, so a grocery list or a travel brochure becomes something actionable. You can point it at a note or a photo, and it will try to turn that into a task, like building a shopping cart or finding a similar travel deal online. You stay in control the whole time, but the heavy lifting shifts to the background, which is really where this starts to feel less like an assistant and more like a quiet operator working for you.

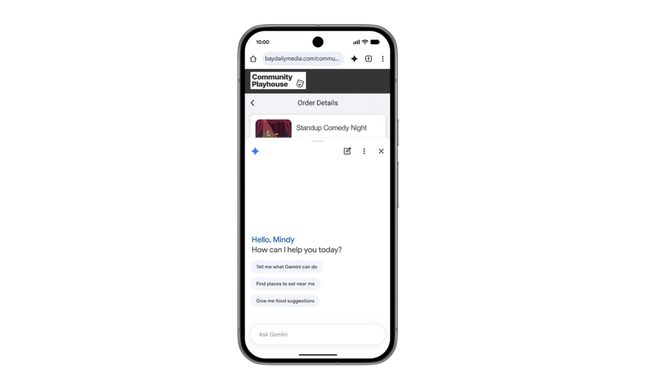

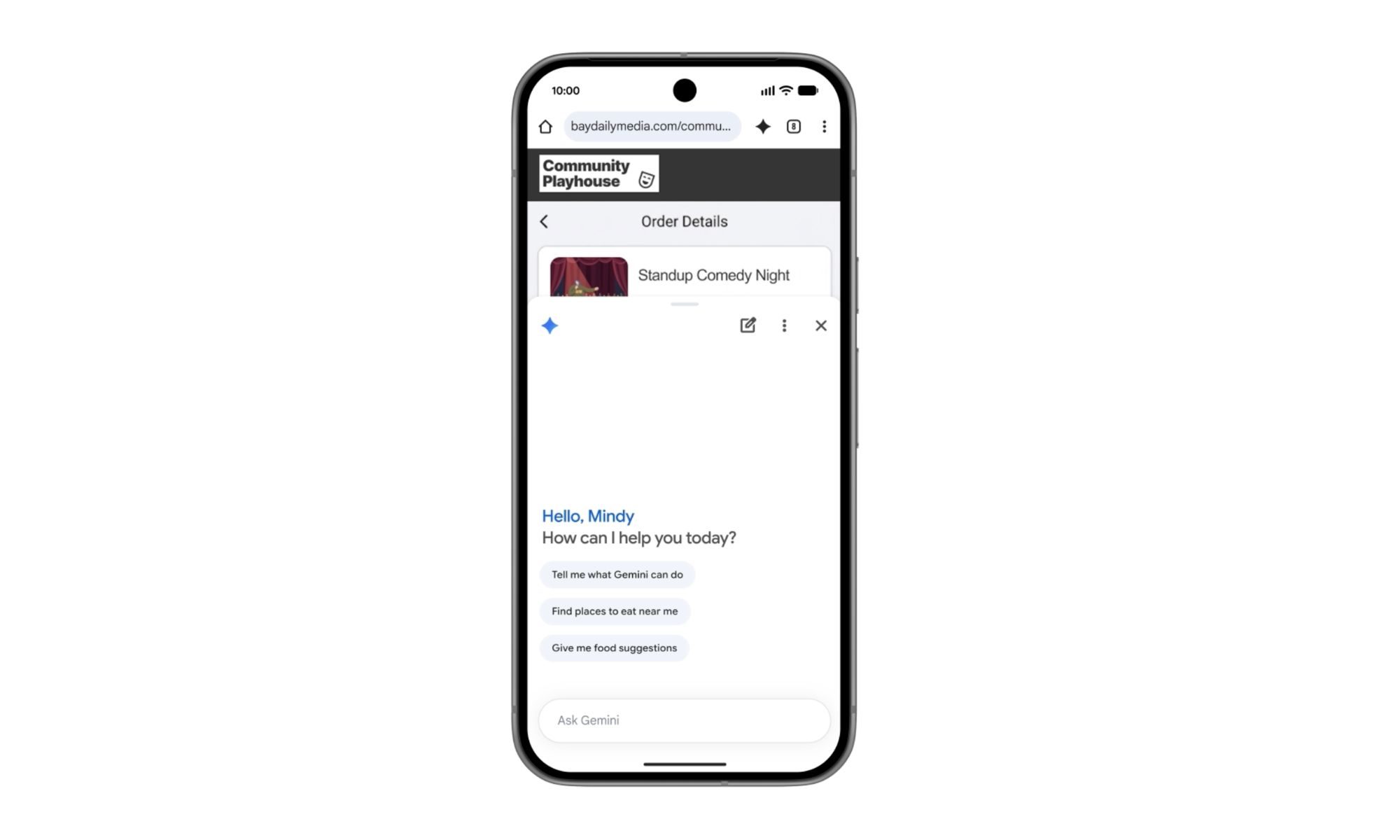

Chrome is about to do more than just open tabs for you

Starting in late June, Android users will see Chrome evolve into something far more capable than a regular browser. With Gemini built directly into Chrome, it will no longer just be about opening tabs and scrolling endlessly. Instead, it can actually help you make sense of what you are reading, pull out key points, and even compare information across different pages without you doing all the manual work.

What really stands out, though, is how far Google is willing to push this idea of a browser that acts on your behalf. With auto-browse, Chrome can take over some of the more tedious parts of everyday life online, like booking appointments or even sorting out parking reservations. It is one of those upgrades that sounds almost too convenient at first, but if it works as intended, it could genuinely change how much effort we spend just getting simple things done on the web.

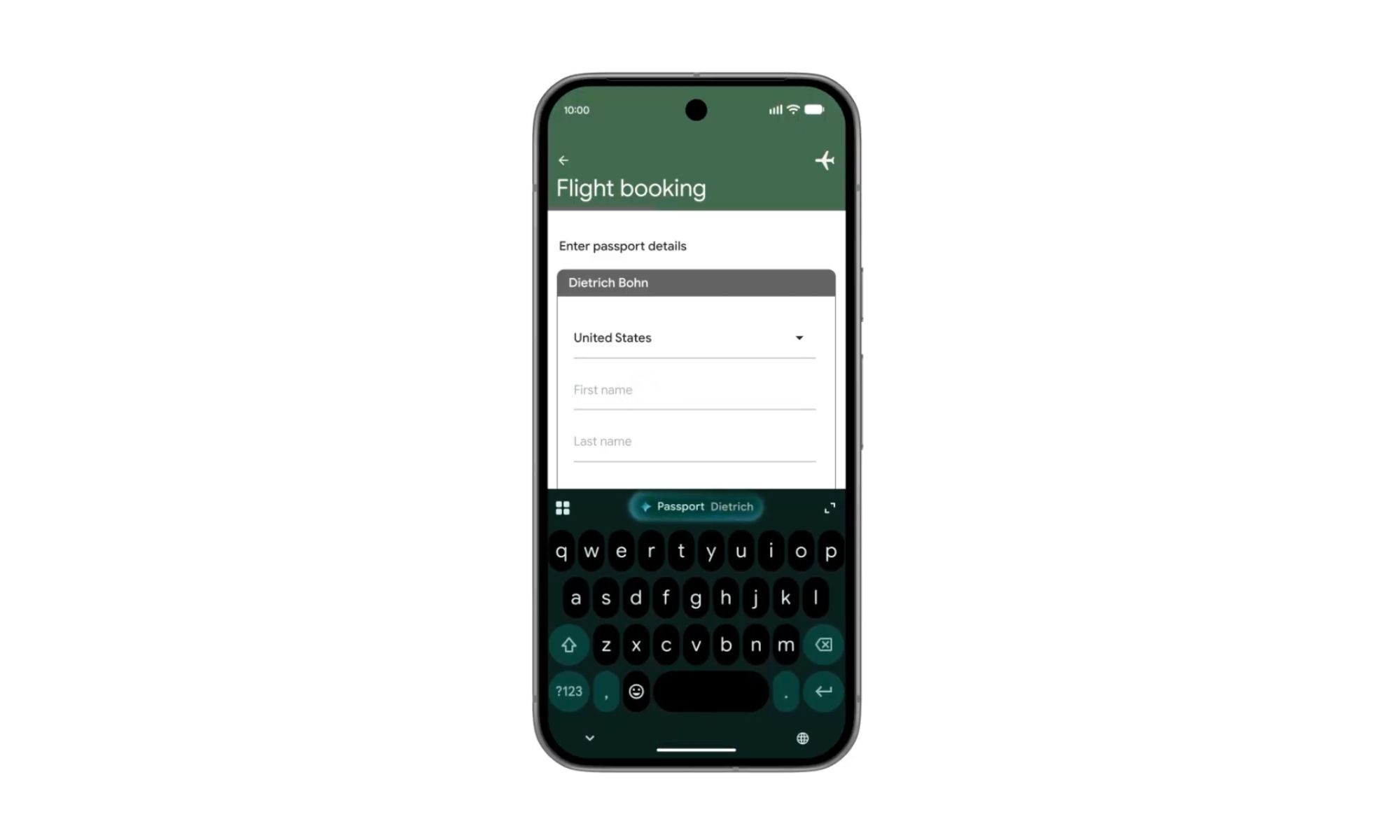

Your phone is about to get way better at filling in the blanks

Autofill on Android is finally starting to feel like it is growing up. What used to be a simple shortcut for names, emails, and passwords is now being upgraded into something much smarter with Gemini behind it. Instead of just remembering a few saved fields, your device can now understand context and pull in relevant information across apps, including Chrome, to help complete those annoying bits of text you keep typing over and over again.

The bigger win here is how it tackles something everyone hates: filling out long, messy forms on a phone screen. Whether it is address details, booking information, or repetitive sign-ups, Android can now lean on your connected apps to fill in the gaps for you. That said, Google is not forcing anything here. The Gemini-powered Autofill experience is fully opt-in, so you decide when it steps in, and you can switch it off anytime. That is a sensible approach for something so deeply tied to personal data, and if it works as promised, it could make mobile form-filling feel much less painful than it does today.

From “ums” and “ahs” to surprisingly polished messages

Voice typing on Android has always been one of those features that is impressively useful in theory but slightly messy in practice. Gboard already does a solid job of turning speech into text, but real human speech is rarely clean. We pause, repeat ourselves, throw in filler words, and change direction mid-sentence. Rambler, a new Gemini-powered feature, is Google’s attempt to close exactly that gap between how we speak and how we want our messages to look.

Instead of forcing you to “speak perfectly,” Rambler takes a more forgiving approach. You can talk naturally, and it will intelligently pick out the meaningful parts, stitch them together, and turn them into a clean, readable message. It even handles multilingual conversations comfortably, which feels very real-world. Switching between English, Hindi, or a mix of both mid-sentence is no longer a problem, since it understands context and tone rather than just words. Google also says audio is processed in real time for transcription and is not stored, which should help ease privacy concerns. If it works as intended, this feels like having a very patient editor sitting inside your keyboard.

Your widgets are getting a very smart upgrade

Android widgets have always been one of those features people either love or forget about entirely, but Google is clearly trying to change that with Gemini Intelligence. With a new feature called Create My Widget, widgets are no longer just static blocks of information. Instead, they become something you actively shape using simple natural language, which honestly feels like the most Android thing Google could do with AI.

You can simply describe what you want, and Gemini builds a widget tailored to that need. It could be as specific as weekly high-protein meal suggestions for your fitness routine, or as stripped down as a weather view that shows only wind speed and rain for your cycling habits. The end result is a home screen that feels less like a default layout and more like something designed around your actual life. And because this extends to Wear OS as well, it is not just about your phone anymore, but about having the right information on your wrist at the right time.

A smarter Android wrapped in a more thoughtful design

Google is also giving Gemini Intelligence a visual identity that feels more intentional than anything we have seen before on Android. Built on top of Material 3 Expressive, the new design language is not just about making things look polished. It is about making the interface feel alive in a controlled way, with animations that guide your attention rather than fight for it. It aims to calm the chaos modern smartphones tend to create.

What ties all of this together is the bigger shift in how Android is being positioned. Gemini Intelligence is not just about adding AI features to existing tools. It is quietly reshaping how those tools look, behave, and respond to you. From handling repetitive tasks in the background to building interfaces that adapt to your needs, Google is clearly pushing toward a future where your device feels less like something you operate and more like something that works with you. And if it all comes together as intended, this could be one of those rare Android upgrades that actually changes daily use in a noticeable way.