The Mars 2020 rover is being prepared to join its sibling Curiosity on the surface of Mars, with scientists fitting the instruments it will need for the mission.

The rover will head to an area of Mars called Jezero Crater, at the edge of an impact basin called the Isidis Basin. Jezero Crater has been a target of scientific interest for some time, and it was also a candidate landing site for the Curiosity rover before Gale Crater was chosen instead.

Now scientists will finally get the chance to gather data from Jezero Crater. The crater is believed to have held an ancient lake, and it is even remotely possible that life once developed there. Even if, as is probable, the region shows no sign of life, it will likely contain minerals that form in the presence of water, which scientists can study for clues about how the lake changed over time.

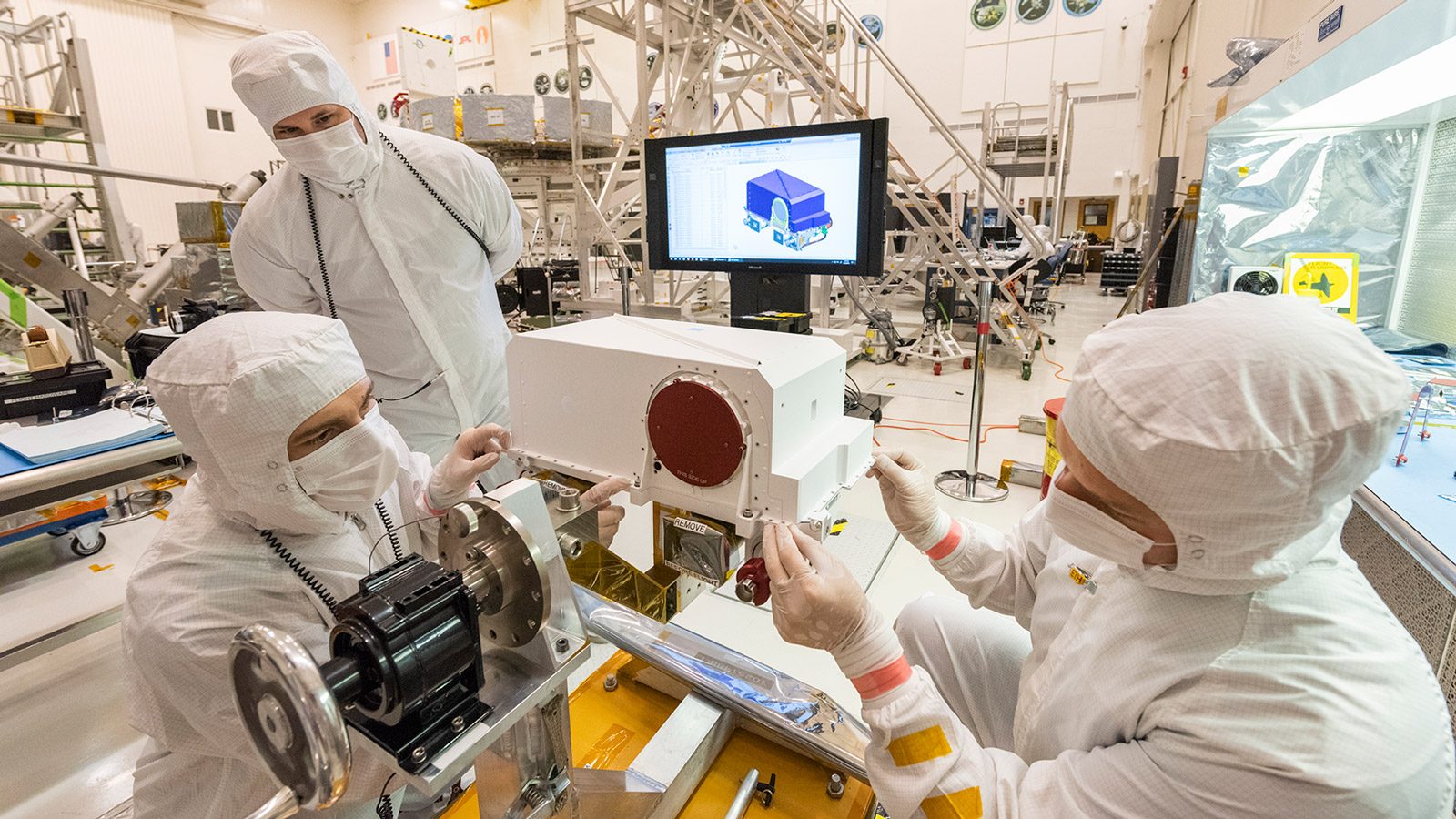

To navigate and document the crater, however, the rover will need to collect visual information. For this purpose, it is being equipped with Mastcam-Z, a pair of high-definition cameras that will be installed this week. Mastcam-Z will be able to capture color images as well as zoom in. This will help scientists observe the mineralogy and structure of the rocks and sediment on Mars.

“Mastcam-Z will be the first Mars color camera that can zoom, enabling 3D images at unprecedented resolution,” Mastcam-Z Principal Investigator Jim Bell of Arizona State University said in a statement. “With a resolution of three-hundredths of an inch (0.8 millimeters) in front of the rover and less than one-and-a-half inches (38 millimeters) from over 330 feet (100 meters) away — Mastcam-Z images will play a key role in selecting the best possible samples to return from Jezero Crater.”

For now, the camera is protected by a lens cover (the round red object in the photo above) while it is fitted to the rover's remote sensing mast (RSM). Once the rover touches down on Mars, the RSM will be raised so the cameras can get a view of the surroundings.