Our hands are pretty great at picking up all manner of objects, and our brains are finely tuned to work out exactly where and how to grip an object most securely. That’s not easy for a robot, however. Faced with a world full of strange-shaped objects to pick up and manipulate, there’s no easy way to program a robot with the precise grip it should use for every single object it might encounter.

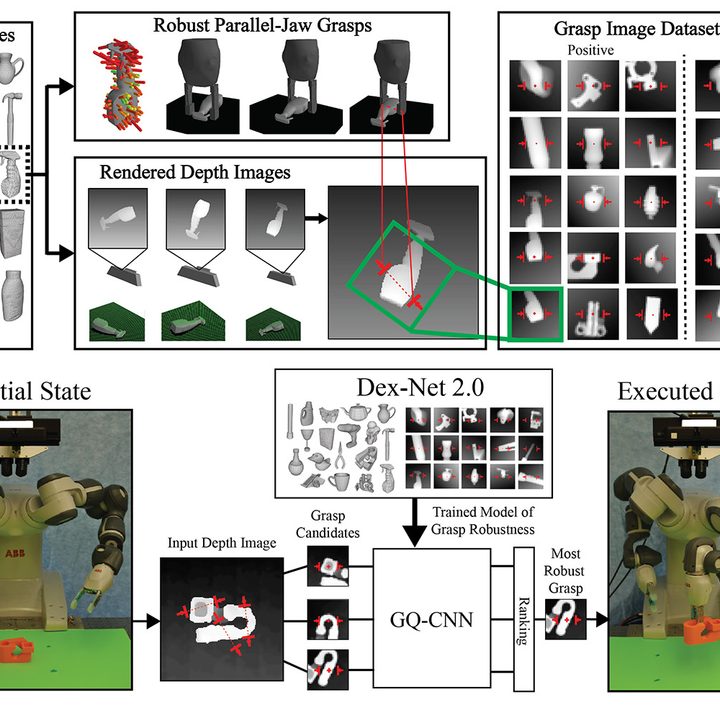

That’s where researchers from the University of California, Berkeley come in. They’ve developed a system called DexNet 2.0 that works out how to perform this task not by endlessly practicing in real life, but by analyzing objects in virtual reality, courtesy of a deep learning neural network.

“We construct a probabilistic model of the physics of grasping, rather than assuming the robot knows the true state of the world,” Jeff Mahler, a postdoctoral researcher who worked on the project, told Digital Trends. “Specifically we model the robustness, or probability of achieving a successful grasp, given an observation of the environment. We use a large dataset of 1,500 virtual 3D models to generate 6.7 million synthetic point clouds and grasps across many possible objects. Then we can learn to predict the probability of success of grasps given a point cloud using deep learning. Deep learning allows us to learn this mapping across such a large and complex dataset.”
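To make the idea of “robustness” concrete, here is a minimal Python sketch. It is not DexNet’s code, and it swaps the deep network for plain random sampling on a toy problem: a two-fingered gripper closing on a 2D disc, with the gripper’s vertical offset known only up to some sensor noise. The function names, the disc model, the friction value, and the noise level are all assumptions made for illustration. The point is only to show what it means to score a grasp by its probability of success under uncertainty and then pick the highest-scoring candidate.

```python
import math
import random


def grasp_succeeds(offset, radius, friction):
    """Toy success test for a parallel-jaw grasp on a disc (hypothetical model)."""
    if abs(offset) >= radius:
        return False  # the jaws miss the disc entirely
    # Angle between the jaws' closing direction and the surface normal at contact.
    angle = math.asin(abs(offset) / radius)
    # The grasp holds only if the contact force stays inside the friction cone.
    return angle <= math.atan(friction)


def estimate_robustness(offset, radius=1.0, friction=0.5,
                        noise_std=0.2, samples=2000, seed=0):
    """Estimate the probability of success when the true offset is uncertain."""
    rng = random.Random(seed)  # fixed seed so repeated runs give the same score
    hits = sum(
        grasp_succeeds(offset + rng.gauss(0.0, noise_std), radius, friction)
        for _ in range(samples)
    )
    return hits / samples


def best_grasp(candidates, **kwargs):
    """Return the candidate offset with the highest estimated robustness."""
    return max(candidates, key=lambda c: estimate_robustness(c, **kwargs))


if __name__ == "__main__":
    for offset in (0.0, 0.4, 0.8):
        print(offset, estimate_robustness(offset))
    print("best:", best_grasp([0.8, 0.0, 0.4]))
```

In this toy setup, a grasp through the middle of the disc scores highest because small position errors still leave it inside the friction limit, while a grasp near the edge rarely survives the noise. As Mahler describes it, DexNet instead learns to predict this kind of score directly from a point cloud, using its large synthetic dataset.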

The most obvious application for DexNet would be to improve robots used in warehousing or manufacturing, enabling them to cope with new components or other objects and to manipulate them, whether by packing them into boxes for shipping or by performing assemblies. However, as Mahler points out, the technology could also improve the capabilities of home robots, including those that tidy up items or provide assistive care, for example by bringing items to elderly folks who can’t otherwise reach them.

There’s still more work to be done, though. “The big thrust of research in the next year is related to having the robot grasp for a particular use case,” Mahler said. “For example, orienting a bottle so it can be placed standing up or flipping Legos over to plug them into other bricks.”

Other specifics on the agenda include grasping objects in clutter and reorienting objects for assembly. The team also plans to release, later in 2017, the code needed to let users generate their own training datasets and deploy the system on their own parallel-jaw robot.

“We have some interest in commercialization, but are primarily interested in furthering research on the subject in the next 6-12 months,” Mahler concluded.