The humble mouse pointer has barely changed in decades. It moves, clicks, selects, drags, and occasionally turns into a spinning wheel of frustration. Google now wants to turn that tiny arrow into one of the most powerful AI tools on your laptop, which sounds ridiculous until you think about how often you use it.

The company has announced Magic Pointer for Googlebook, its new category of Gemini-powered laptops. The feature gives the cursor AI abilities, allowing it to understand what you are pointing at and help you act on it without needing a long prompt or a separate chatbot window.

Can the cursor become the new AI button?

In a new DeepMind post, the company explained how it is rethinking the pointer for the AI era. The idea is to make Gemini understand the exact part of a webpage, image, table, document, or video frame the user is referring to. That turns the cursor from a basic navigation tool into a kind of AI remote control for the entire screen.

This is where the whole thing starts to sound wonderfully absurd. A pointer could turn a table into a chart, compare products you select on a webpage, summarize a PDF into bullets for an email, or identify a building in a photo and pull up directions. The cursor, once used mainly to click tiny buttons, is suddenly being asked to understand context, intent, and action.

Why does this matter for Googlebooks?

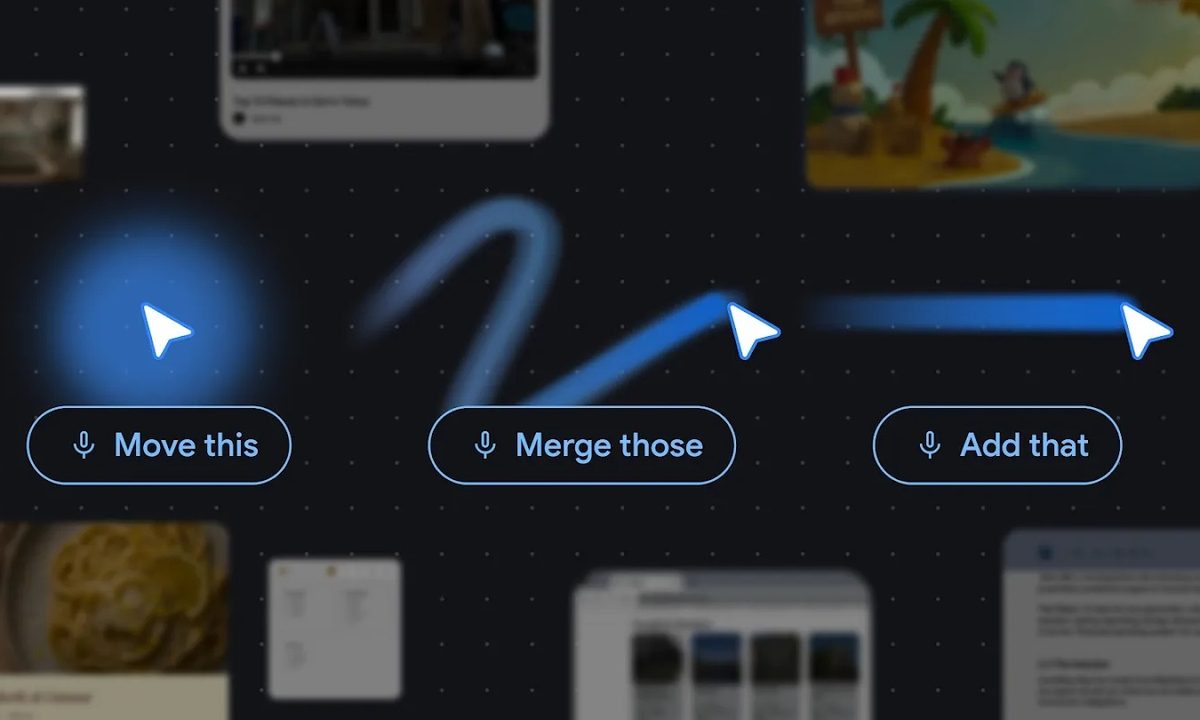

Google has taken inspiration from the way people already communicate offline. You usually do not describe every object in a room before asking someone to move it. You point and say, “move this” or “fix that.” Magic Pointer brings that same idea to the screen. The cursor tells Gemini what you are referring to, while short commands such as “add this,” “merge those,” or “what does this mean?” tell it what action to take.

Because Magic Pointer is being announced as part of the Googlebook platform, it will be deeply integrated into those laptops. Googlebook users should therefore be able to use it across the whole system, rather than being limited to a single app or browser window.

For everyone else, this AI pointer will be limited to Gemini in Chrome for now. Google says users can point to specific parts of a webpage and ask questions, such as comparing multiple selected products, summarizing technical specs from a product listing, or instantly converting prices into a different currency.

If Magic Pointer works well, everyday AI tasks may no longer need a prompt box at all.