As recent University of Iowa graduate Zach Arenson will tell you, learning a typical 3D modeling program takes anywhere from weeks to several hundred hours. But with a new tool from Hungarian start-up Leonar3do, that time dropped to just a few days. I know what you’re thinking: It’s obvious the company paid this kid to come boast about the product and how wonderful it makes his life. But after my hands-on demo, it was only a few minutes before I was drawing my own (unintentionally phallic) version of the Death Star.

The magic comes in two parts: the 3D modeling software and the physical tool, the Bird. The Bird is a tripod-esque pen that lets you drag and shift the 3D model, pinching and pulling until the object takes the shape you want. A combination of three line sensors attached to the monitor and a pair of 3D glasses also lets you look around the virtual object, making it seem like the item is right in front of you. If you tilt your head to the left, you can see around the side of the object all the way to the back.

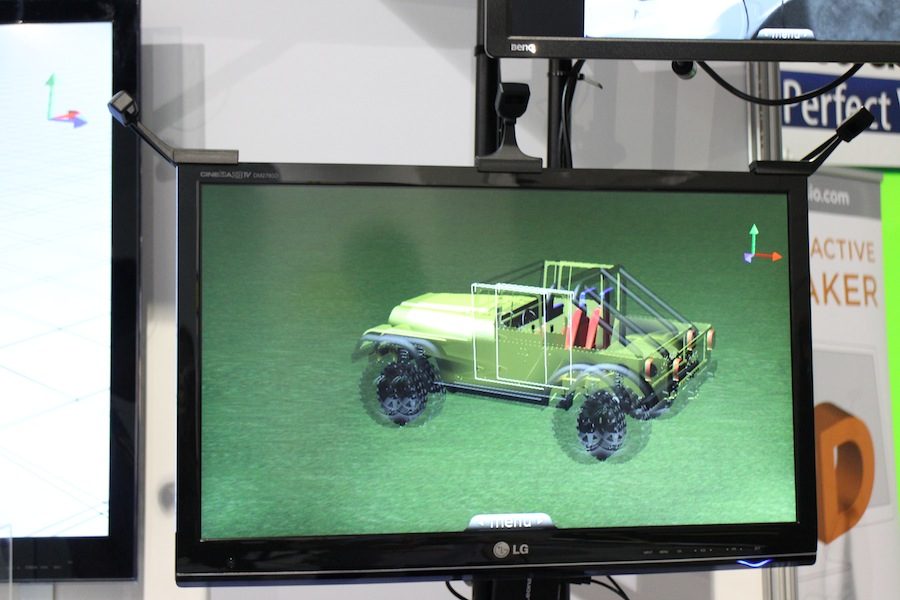

The fascinating aspect of this Leonar3do technology is how intuitive the experience feels. Arenson is right that the learning curve is extremely short; if you already have some working knowledge of Photoshop, you’ll find the brush stroke percentage and sizing tools rather familiar. Leonar3do representative Ronald Manyai also said the company held a contest at a local school, where students were able to model a 3D car in just one weekend.

There are several ways to toy around with the Leonar3do technology: You can buy the tool kit, which comes with the Bird pen, triangulation sensors, and 3D goggles; purchase just the software; or download the app and use your mobile phone as the main 3D controller. In the last case, you would hover the phone in front of the monitor and use the buttons on your phone’s screen to shapeshift your virtual 3D sculpture.

It’s a fun tool for designers, and for educators who want to help students learn the basics of 3D modeling and logic even without an ounce of programming or architectural knowledge. At a cost of $2,000 for the software, it’s not a completely unrealistic price point for classrooms across America, but Manyai says a cheaper $50 version will launch in the coming months for those looking to experiment. The accompanying app will come to both the App Store and Google Play in March.

Here’s a demonstration video from Leonar3do showcasing a much more appealing model than my tentacle planet.