Like a pair of sneakers someone’s wearing? Or maybe a dress? Quite a few apps and services, such as Amazon’s Firefly and Samsung’s Bixby Vision, let you simply point your smartphone camera at an object and search for it or for similar styles. Google is following suit with a comparable feature in Google Lens, but it has the potential to reach far more people.

Google Lens is currently built into the Google Assistant on Android phones, as well as Google Photos. It lets you point the smartphone camera at objects to identify them, learn more about landmarks, recognize QR codes, pull contact information from business cards, and more. At its annual Google I/O developer conference, the search giant announced four new improvements to Lens, and we got to try them out.

Built into camera apps

Google Lens is now built into the camera app on phones from 10 manufacturers: LG, Motorola, Xiaomi, Sony, Nokia, Transsion, TCL, OnePlus, BQ, and Asus. That’s not counting Google’s very own Pixel 2. You can still access it through Google Assistant on all Android phones.

We got a chance to try it out on the recently announced LG G7 ThinQ, and the new option sits right next to the phone’s Portrait Mode.

Style Match

The biggest addition to Lens in this I/O announcement is Style Match. As with Bixby Vision or Amazon Firefly, you can point the smartphone camera at certain objects to find similar items. We pointed it at a few dresses and shoes, and were able to find similar-looking items, if not the exact same item. Once you find what you’re looking for, you can purchase it directly through Google Shopping, if it’s available.

It’s relatively quick, and an easy way to find things you can’t quite write into the Google Search bar.

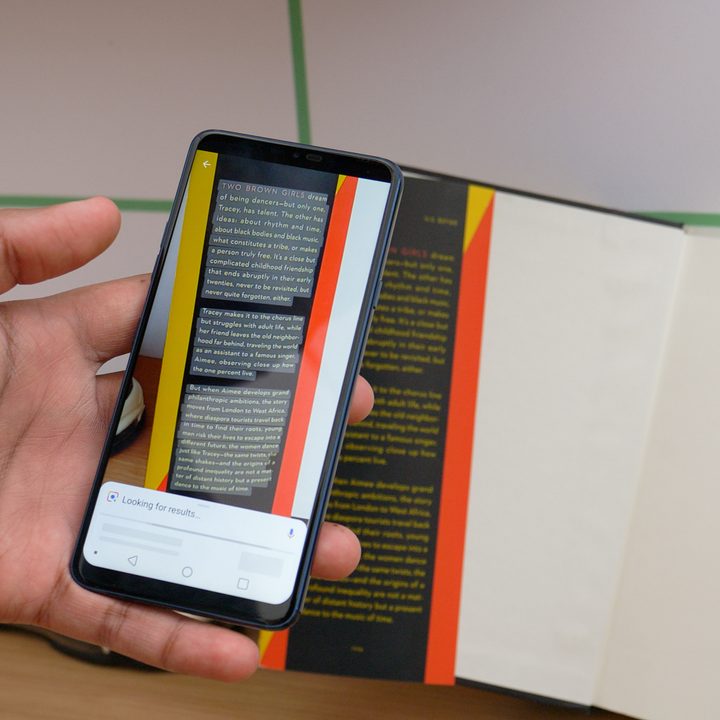

Smart text selection

Perhaps even more useful is Smart Text Selection. Point Google Lens at text, say, from a book or a menu, and it can single out the text from everything else. You can then tap on the text and copy it or translate it. When we tried it, Lens managed to grab three entire paragraphs of text, though we’d have to do more testing to see how well it can pick up handwritten text.

Real time

Google Lens now works in real time, so you don’t need to pause and take a photo for it to understand the subject. That means you can point it at several things, and you will see it place colored dots on the objects it grabs information for. Google said it is identifying billions of words, phrases, and things in a split second, all thanks to “state-of-the-art machine learning, on-device intelligence, and cloud TPUs.”

Google said it will be rolling out all of these features toward the end of May.