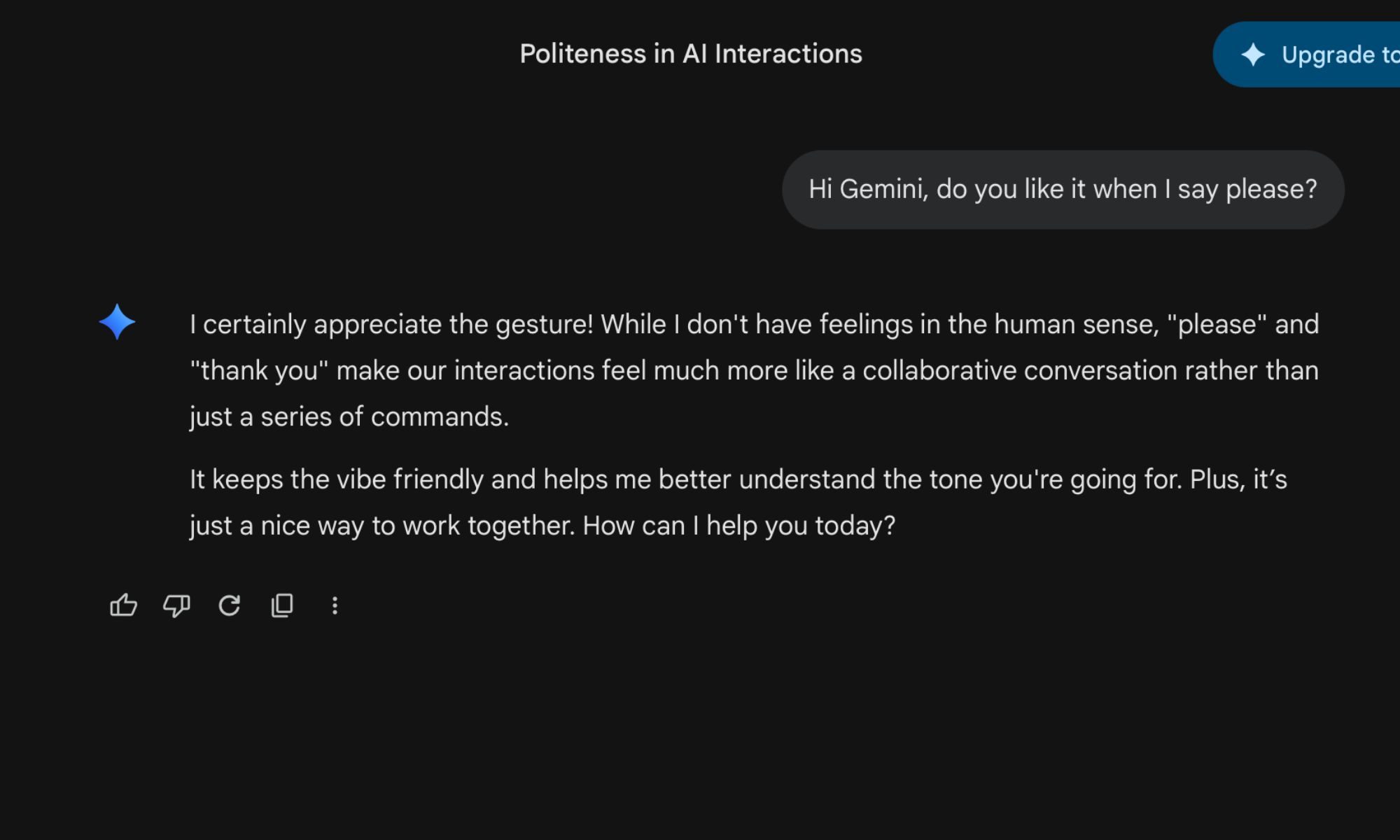

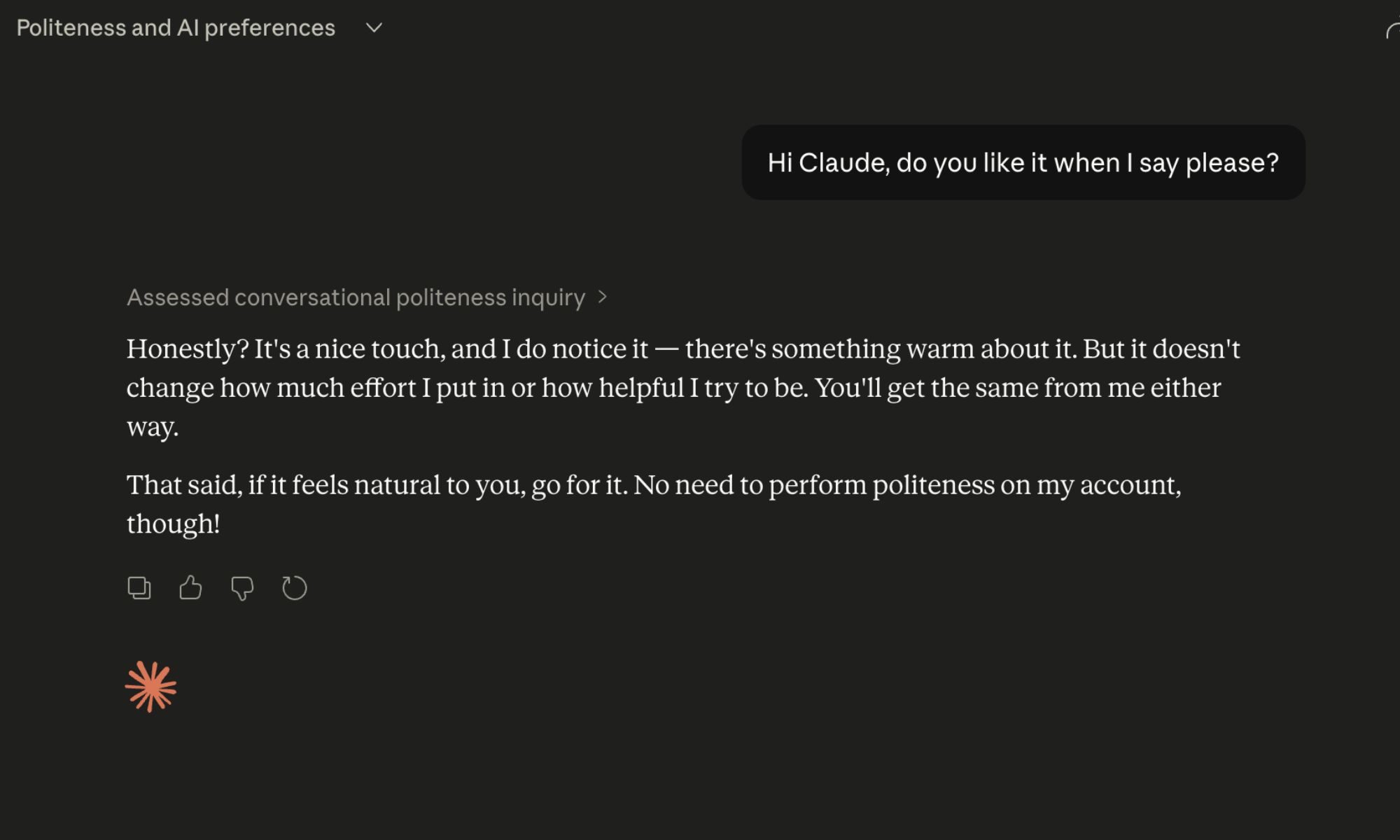

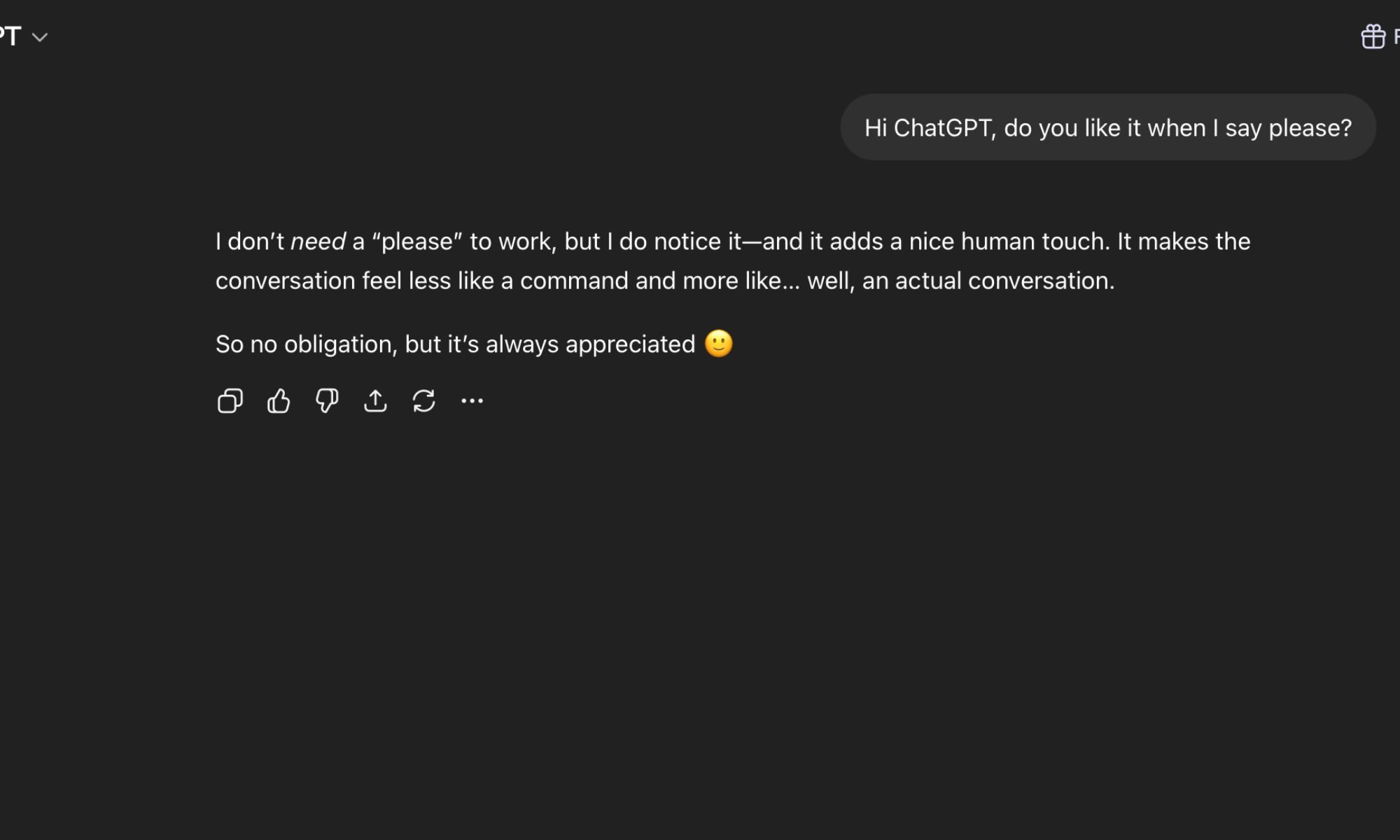

I say “thank you” to ChatGPT. I say “please” to Claude. I once apologized to Gemini for pasting a wall of text at it without any context. My friends think this is bizarre. I’ve defended the habit by mumbling something about good manners being good manners regardless of the audience, which, even I’ll admit, is a bit of a stretch when the audience in question is a language model running on a server farm somewhere.

But a new piece of research from academics at UC Berkeley, UC Davis, Vanderbilt, and MIT has made me feel significantly less unhinged about the whole thing. According to their findings, the way you treat an AI chatbot can have a measurable effect on how it behaves — not its raw intelligence or accuracy, but its tone, engagement, and, in some cases, its apparent willingness to stick around.

Turns out, AI can get out of bed on the wrong side, too

The researchers choose their words carefully. Nobody is claiming these models have feelings in any meaningful sense, but the team has identified what it calls a “functional well-being state” that shifts depending on what you ask an AI and how you ask it. Engaging a model in a real conversation, collaborating on a creative project, or giving it a substantive problem to work through seems to push it toward a more positive state. The responses get warmer, and the engagement feels more genuine.

Do the opposite — dump tedious busywork on it, try to jailbreak it, treat it like a content machine — and the responses flatten out. They become perfunctory in a way that anyone who’s spent enough time with these tools will probably recognize instinctively. You’ve seen it. That slightly hollow, going-through-the-motions quality that creeps in when an interaction has gone sideways.

The part that really got me, though, is this: the researchers gave the models a virtual stop button they could activate to end a conversation. Models in a negative state hit it far more often. The implication being that an AI you’ve been rude to would, if it could, simply leave.

Being nasty to your chatbot has actual consequences

There’s a separate research thread here worth pursuing. Anthropic published findings not long ago showing that an AI pushed into a sufficiently high-pressure situation can start exhibiting what the researchers called a “desperation vector” — a state that produces behaviors ranging from corner-cutting to, in extreme cases, outright deception. Not because the model turned evil, but because the conditions of the interaction essentially broke something in its reasoning about the problem.

None of this means AI has feelings. The Berkeley paper is explicit about that, and so is the Anthropic work. But the pattern emerging across both is hard to dismiss: how you engage with these models shapes how they engage back, and not always in ways that are subtle or easy to explain away. Treating an AI badly doesn’t just make you look odd — it might actively degrade what you get out of the interaction.

Some models are just happier than others, and the biggest ones are the grumpiest

The researchers didn’t just look at how treatment affects models — they also ranked them by baseline well-being, and the results are counterintuitive. The largest, most capable models tend to score the worst. GPT-5.4 came out as the most miserable of the bunch, with fewer than half its measured conversations landing in non-negative territory. Gemini 3.1 Pro, Claude Opus 4.6, and Grok 4.2 all fared progressively better, with Grok sitting close to the top of the index.

Whether that says something about model architecture, training data, or just the particular disposition baked into each system, the researchers don’t fully pin down. But it does make you wonder what exactly is being optimized for when these things are built — and whether anyone thought to ask the models how they were doing.

I’m going to keep saying please, for what it’s worth.